Secure Vibe Coding

AI-assisted development with enterprise-grade security

Protect your development environment from prompt injection, data leaks, and unauthorized tool usage while maintaining developer velocity across IDE, CLI, and MCP agents.

Why Secure AI Development?

Secrets embed in prompts and generated code

Developers paste API keys, tokens, and credentials into AI tools for debugging, creating immediate exposure risks across chat logs and code repositories.

Shadow AI usage multiplies blind spots

Teams deploy unsanctioned plugins, extensions, and SaaS assistants outside IT oversight, fragmenting governance and making incident response nearly impossible.

MCP servers create uncontrolled data access

Model Context Protocol integrations allow AI agents direct access to organizational files, databases, and APIs without proper authentication or audit controls.

AI-generated code lacks security guarantees

LLMs produce syntactically correct code with silent vulnerabilities: missing input validation, insecure defaults, and outdated dependencies that slip past manual review.

Fragmented audit trails across platforms

AI workflows span chat interfaces, IDEs, MCP servers, and multiple SaaS platforms, creating visibility gaps that complicate forensics and compliance reporting.

Escalating prompt-based attacks

Prompt injection, credential extraction, and LLM manipulation are emerging threats. A single compromised prompt can lead to data exfiltration or code execution.

How Lumeus Secures Vibe Coding

Complete security for AI coding agents without disrupting developer velocity or compromising innovation

AI Tool Discovery

Complete observability into AI-assisted development workflows

- Detect all AI IDEs and CLIs – Comprehensive inventory of AI tools being used on developer machines

- Discover all MCP servers – Map data access patterns and exposure risks within AI development environments

- Map GitHub repository access – Track how AI tools interact with code repositories and identify data exposure risks
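
As a rough illustration of what MCP server discovery can involve, the sketch below inventories the servers declared in a client configuration file. It assumes the JSON layout used by several MCP clients, with a top-level `mcpServers` object keyed by server name; the function name `list_mcp_servers` and the example entries are hypothetical, and this is not a description of how Lumeus performs discovery.

```python
import json


def list_mcp_servers(config_text: str) -> list[dict]:
    """Return one inventory record per MCP server declared in a client config.

    Assumes a top-level "mcpServers" object (an assumption about the
    client's config format, not a guarantee for every tool).
    """
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    inventory = []
    for name, spec in sorted(servers.items()):
        inventory.append({
            "name": name,
            "command": spec.get("command"),
            "args": spec.get("args", []),
            # Record only the names of environment variables, never their values.
            "env_keys": sorted(spec.get("env", {}).keys()),
        })
    return inventory


# Hypothetical example config, for illustration only.
EXAMPLE_CONFIG = """
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "/home/dev/projects"]
    },
    "internal-db": {
      "command": "python",
      "args": ["db_server.py"],
      "env": {"DB_PASSWORD": "example-only"}
    }
  }
}
"""

if __name__ == "__main__":
    for server in list_mcp_servers(EXAMPLE_CONFIG):
        print(server["name"], server["command"], server["args"], server["env_keys"])
```

Keeping only the environment variable names means the inventory itself does not become another place where credentials are stored.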

Posture Management

Inline guardrails enforced by the Agent-Native Policy Enforcement Point at the agent edge (IDE/CLI/MCP).

- Govern GitHub access – Identity-based rules that control repository permissions and code interactions

- Manage MCP server configurations – Centralized control ensuring consistent security posture across environments

- Control risky IDE extensions – Centralized scanning and policy enforcement for developer tool installations

Runtime Protection

Real-time threat detection and blocking

- Secure access to resources – Native authentication directly from IDEs with zero-trust controls

- Protect MCP server usage – Intelligent proxying that monitors and controls data flows between AI tools and resources

- Firewall agent-native code generation – Integrated threat detection and policy controls inline with AI code generation

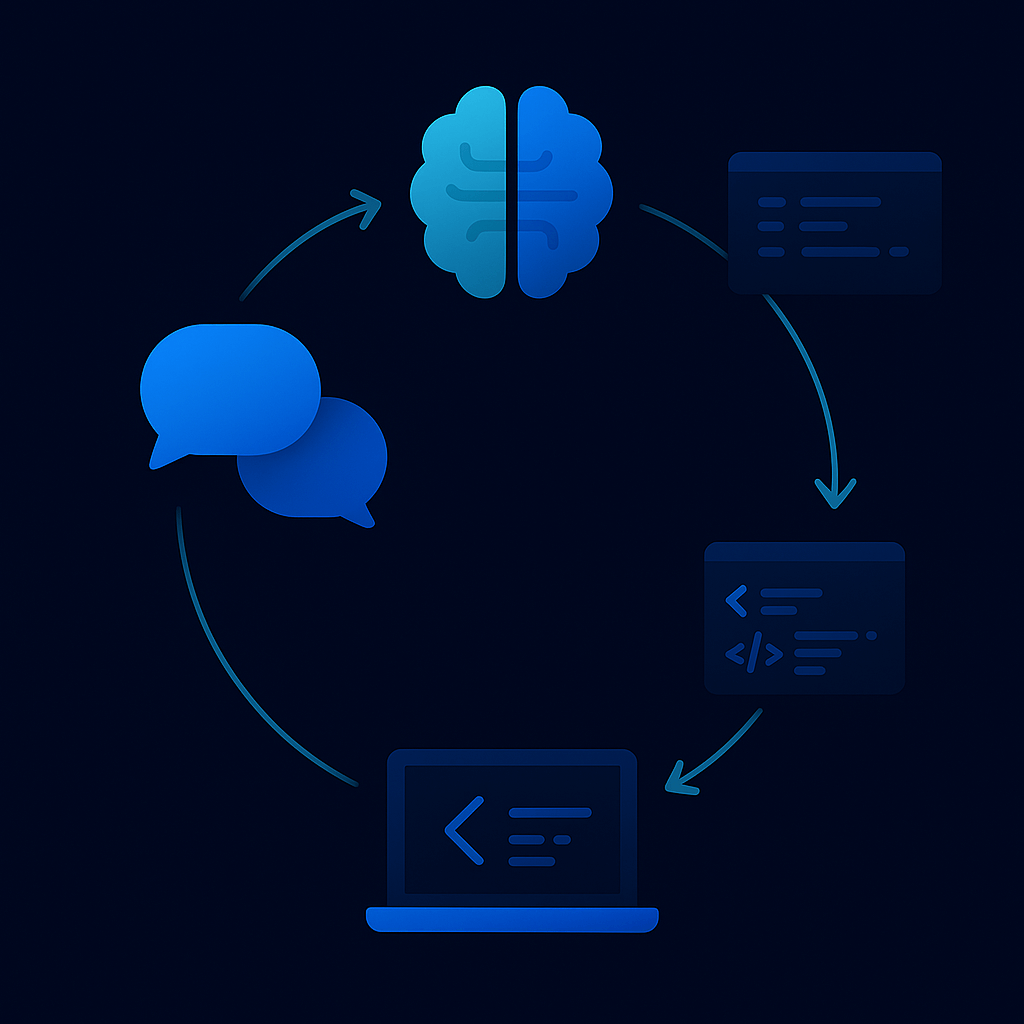

Secure AI Infrastructure in Action

See how organizations secure AI infrastructure access with identity-integrated controls without compromising developer productivity or AI agent workflows.

Built for Secure Vibe Coding

FOR DEVELOPERS

Code with confidence, ship with speed

Native integrations with popular AI coding platforms to maintain development speed while enforcing security policies automatically.

Automated safeguards

Inline DLP prevents credential exposure and sensitive data leaks in prompts or generated code without manual security checks.
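
To make the idea of inline DLP concrete, here is a minimal sketch of redacting credentials from a prompt before it leaves the developer's machine. The three patterns follow commonly documented credential formats and are illustrative only; the names `SECRET_PATTERNS` and `redact_prompt` are hypothetical, and this is an assumption-laden example rather than the product's detection logic.

```python
import re

# Illustrative patterns only; a production detector would need far more
# coverage (entropy checks, provider-specific formats, context rules).
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace anything matching a known secret pattern with a placeholder.

    Returns the redacted prompt and the labels of the patterns that matched.
    """
    findings = []
    redacted = prompt
    for label, pattern in SECRET_PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[REDACTED:{label}]", redacted)
    return redacted, findings


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
    text, found = redact_prompt("Why does key=AKIAIOSFODNN7EXAMPLE get a 403?")
    print(text)
    print(found)
```

Redacting rather than rejecting the prompt lets the developer keep working, which is the trade-off this kind of inline control aims for.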

Compliance-ready delivery by default

Focus on features while automated policy enforcement ensures every AI interaction meets enterprise security requirements.

FOR IT TEAMS

Plugin deployment with native MDM tools

Push and manage Lumeus across developer machines using existing mobile device management infrastructure.

Centralized rollbacks on existing IDEs

Quickly revert problematic AI tool configurations across all development environments from a single console.

Visibility for IT operations

Real-time organizational mapping of AI tool adoption, performance metrics, and resource utilization for capacity planning.

FOR SECURITY TEAMS

Posture management and runtime security

Monitoring of AI development environments, with real-time detection of vulnerable code before it reaches production.

Secure remote access management

Zero-trust authentication for AI agents accessing Kubernetes, SSH, and Jupyter notebooks with ephemeral access controls.

Block vulnerable AI-generated code

Automated scanning and blocking of insecure patterns, outdated dependencies, and prompt injection attempts across all AI interactions.
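
One simple way to picture scanning for insecure patterns is a static check over generated code before it is accepted. The sketch below walks the syntax tree of a Python snippet and flags a few well-known risky constructs. The rule set and the name `find_insecure_patterns` are hypothetical, it covers only insecure code patterns (not outdated dependencies or prompt injection), and it is not a description of the product's scanner.

```python
import ast

# A deliberately tiny, illustrative rule set.
RISKY_CALLS = {"eval", "exec"}


def find_insecure_patterns(source: str) -> list[str]:
    """Flag a few risky constructs in Python source without executing it."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        # Direct calls to eval() or exec().
        if isinstance(node.func, ast.Name) and node.func.id in RISKY_CALLS:
            findings.append(f"line {node.lineno}: call to {node.func.id}()")
        # Keyword arguments that switch off a safety default.
        for kw in node.keywords:
            if not isinstance(kw.value, ast.Constant):
                continue
            if kw.arg == "shell" and kw.value.value is True:
                findings.append(f"line {node.lineno}: shell=True")
            if kw.arg == "verify" and kw.value.value is False:
                findings.append(f"line {node.lineno}: verify=False")
    return sorted(findings)


# Hypothetical AI-generated snippet; it is parsed, never run.
GENERATED = """import subprocess, requests
subprocess.run(cmd, shell=True)
requests.get(url, verify=False)
result = eval(user_input)
"""

if __name__ == "__main__":
    for finding in find_insecure_patterns(GENERATED):
        print(finding)
```

A blocking policy could then refuse any snippet for which the findings list is non-empty, while an advisory policy would only surface the findings to the developer.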